In this era of machine learning and artificial intelligence, it is very important for young developers and programmers to familiarize themselves with these cutting-edge technologies.

In this post, I am going to discuss an instructive project that I recently built. It is about detecting and recognizing faces in a given image or a live camera feed.

So, let’s dig into the topic at hand. I will go through the whole procedure step by step, so read this article completely to understand everything clearly. I will also give you the full project code at the end of this article.

Prerequisites

Well, not much is needed to understand this project, but below is the bare minimum you should know:

- Working knowledge of the Python language

- Familiarity with basic Python libraries such as NumPy

- A decent understanding of machine learning

Introduction

In this project, we will first build a face detector, which finds faces in a given image and gives us the rectangular coordinates of each one. With it, we can extract faces from an image and programmatically tell how many people are in it, which is useful in applications such as counting people passing through a door on a live camera feed.

After that, we will use this face detector to extract faces from a series of labeled images and train our model on them. Training can take some time; once it finishes, we will save the model to a .yml file, which will be used for predictions later.

Now we just need to load the saved model file and feed it the images or videos in which we want to recognize people. And BOOM!! We know who is who.

There are plenty of possible applications for this project; we will talk about them at the end of this article.

At the end of the article, I will also link to the complete source code of this project.

Libraries Needed

In this project, we are going to use these three libraries:

- os: to walk the file system and read and write the files we need

- cv2 (OpenCV): to load and process images and to provide the face detection and recognition tools

- NumPy: to do calculations and convert data into the formats OpenCV works with

Workflow

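First, we need to set up our working environment. We will code in Python 3 (this project was written with Python 3.7). The os module is part of Python’s standard library and NumPy comes with an Anaconda setup, but OpenCV may need to be installed separately, and the face recognition module we use later (cv2.face) is part of the opencv-contrib-python package.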

Once the environment is set up, we need one more thing: the Haar cascade frontal-face XML file, which can be downloaded from the internet. The detector uses this file under the hood; it stores a pre-trained cascade of Haar-like features for finding frontal faces in an image.

With that, the initial setup is complete.

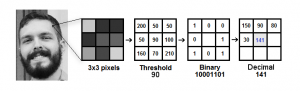

The next thing we are going to use is OpenCV’s LBPH (Local Binary Patterns Histograms) face recognizer. It is our model for training and for recognizing faces in a given image or video. It slides a 3×3 window over the image and thresholds each neighborhood against the intensity of its central pixel: if a surrounding pixel is brighter than the central pixel, it is set to 1, otherwise to 0. The eight resulting bits are read in clockwise order to form a binary number, which is converted to a decimal number and assigned to the central pixel. This is repeated over the whole image. After that, LBPH divides the result into a grid of cells, builds a histogram of the values in each cell, and concatenates these histograms into one feature vector per training image, which it keeps in memory. That is essentially the whole training step.
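To make the description concrete, here is a minimal NumPy sketch of the basic 3×3 LBP operator followed by the per-cell histograms. It is for illustration only: OpenCV’s LBPH recognizer samples a circular neighborhood controlled by its `radius` and `neighbors` parameters, so its codes will not match this version exactly. The function names `lbp_3x3` and `lbp_histogram` are hypothetical.

```python
import numpy as np

# Neighbour offsets (dy, dx), starting at the top-left pixel and moving clockwise.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_3x3(gray):
    """Return basic 3x3 LBP codes for every interior pixel of a 2-D grayscale image."""
    g = gray.astype(np.int32)
    h, w = g.shape
    center = g[1:h - 1, 1:w - 1]
    codes = np.zeros_like(center)
    for i, (dy, dx) in enumerate(OFFSETS):
        neighbour = g[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        # 1 if the neighbour is brighter than the centre, else 0; the first
        # neighbour in clockwise order becomes the most significant bit.
        codes |= (neighbour > center).astype(np.int32) << (7 - i)
    return codes.astype(np.uint8)


def lbp_histogram(codes, grid_x=8, grid_y=8):
    """Concatenate 256-bin histograms of LBP codes over a grid_y x grid_x grid of cells."""
    h, w = codes.shape
    hists = []
    for gy in range(grid_y):
        for gx in range(grid_x):
            cell = codes[gy * h // grid_y:(gy + 1) * h // grid_y,
                         gx * w // grid_x:(gx + 1) * w // grid_x]
            hists.append(np.bincount(cell.ravel(), minlength=256))
    return np.concatenate(hists)
```

For example, a 3×3 patch whose centre is 50 and whose neighbours, read clockwise from the top-left, are 10, 20, 30, 60, 90, 80, 70, 40 produces the bit string 00011110, i.e. the decimal code 30.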

If you want to know more about the OpenCV LBPH recognizer, the face recognition section of the OpenCV documentation covers it in more detail.

So far, we have covered the two important objects we will use in the program: the Haar cascade face detector and the LBPH recognizer. Let’s move on to the implementation.

Implementation

Before recognizing faces, we first need to detect them. For that, we convert the image to grayscale, load the Haar cascade classifier, and call its detectMultiScale method. It returns one rectangle per detected face as four values: x, y, width and height. With these, we can draw a rectangle around each face.

For training, each face image is labeled with the person’s name taken from its file name.
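LBPH needs integer labels, so the names taken from the file names have to be mapped to numbers, and the reverse mapping becomes the name dictionary used later during recognition. The sketch below assumes a hypothetical naming scheme in which each file is called `<name>_<number>.jpg` (for example `alice_1.jpg`, `alice_2.jpg`); adjust `person_name` to match how your files are actually named.

```python
import os

IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp"}


def person_name(filename):
    """Hypothetical scheme: 'alice_01.jpg' -> 'alice', 'bob.png' -> 'bob'."""
    stem = os.path.splitext(os.path.basename(filename))[0]
    return stem.split("_")[0]


def list_images(folder):
    """Return the image files in folder, sorted so labels are reproducible."""
    return sorted(
        os.path.join(folder, f) for f in os.listdir(folder)
        if os.path.splitext(f)[1].lower() in IMAGE_EXTENSIONS
    )


def assign_labels(paths):
    """Map each file to an integer label and build the id -> name dictionary."""
    name_to_id = {}
    labels = []
    for path in paths:
        name = person_name(path)
        # A name seen for the first time gets the next free integer id.
        labels.append(name_to_id.setdefault(name, len(name_to_id)))
    id_to_name = {label: name for name, label in name_to_id.items()}
    return labels, id_to_name
```

Typical usage would be `labels, id_to_name = assign_labels(list_images("faces"))`, where `faces` is a hypothetical folder of training images.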

The next step is to train our classifier with these faces and labels. For that, we create a recognizer with cv2.face.LBPHFaceRecognizer_create() and pass the face images and their labels to its train() method. Training updates the recognizer in place, and we then save it to a file with the .yml extension. We will use this file for the recognition step.

At this point, most of the work is done. Now we only have to read the .yml file we just created and feed it frames with detected faces to predict their labels. The frames can come from a static image, a camera feed, or a video. After prediction, we can show each frame with a rectangle around every face and the person’s name, taken from a name dictionary that maps each numerical label to a name.

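You can experiment with the confidence threshold to get the results that best fit your case and tune the model. Keep in mind that the confidence value LBPH returns is a distance, so lower values mean a closer match.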
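Because the value LBPH returns alongside the label is a distance, a simple way to apply a threshold is to treat any prediction above a chosen cut-off as unknown. In the sketch below, the function name `name_for` is hypothetical and the cut-off of 70 is an arbitrary starting point, not a recommended value, so tune it on your own images. OpenCV can also do this for you: if you pass `threshold=` to `cv2.face.LBPHFaceRecognizer_create`, `predict` returns the label -1 for matches farther away than that value, which the function below also maps to "Unknown".

```python
def name_for(label, distance, id_to_name, max_distance=70.0):
    """Map an LBPH prediction to a display name, or 'Unknown' if the match is weak.

    distance is the second value returned by recognizer.predict(); lower is better.
    max_distance is an illustrative cut-off, to be tuned on your own data.
    """
    if distance > max_distance:
        return "Unknown"
    # Labels missing from the dictionary (including OpenCV's -1) are unknown too.
    return id_to_name.get(label, "Unknown")
```

Raising `max_distance` makes the system name more faces at the risk of more wrong names; lowering it does the opposite.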

Applications

There are numerous applications of this project. Some of them are listed below:

- Threat detection at airports and hotels

- Smart attendance system

- Advanced banking facilities

- Security systems

GitHub link for this project: https://github.com/shashi1iitk/Face-detection-and-recognition-in-python

Don’t forget to star the repository if you like this project.
